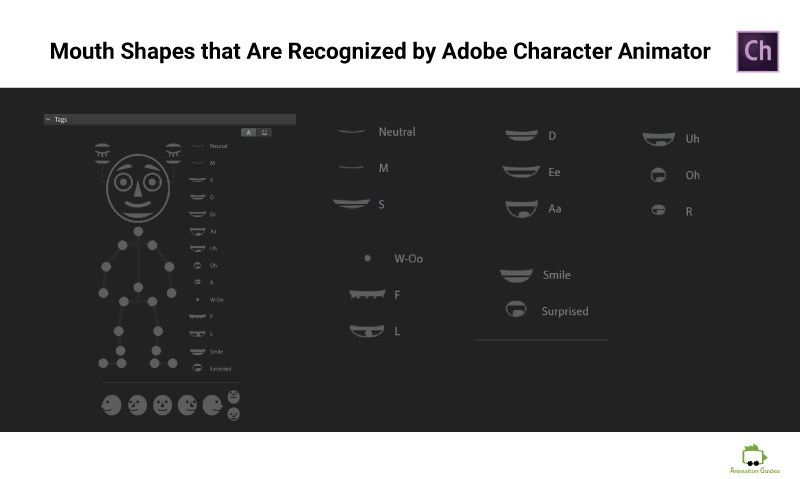

Adobe Character Animator allows you to create automatic lipsync for your characters. There are different approaches to lipsync and to deciding which mouth shapes are needed for an expressive conversation. Adobe Character Animator lets us define a set of 14 mouth shapes that it automatically recognizes and activates based on the camera input, the audio signal, and optionally a transcript we provide.

The Main Idea

In this post, I will describe my process of creating a mouth rig. It is all about creating the art for all the different mouth elements right at the beginning and then just adjusting those elements to fit different mouth expressions.

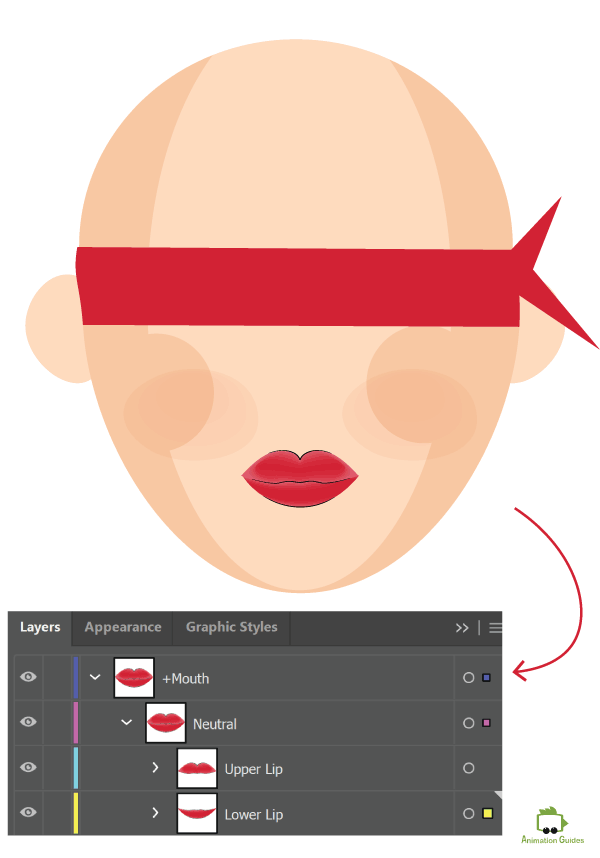

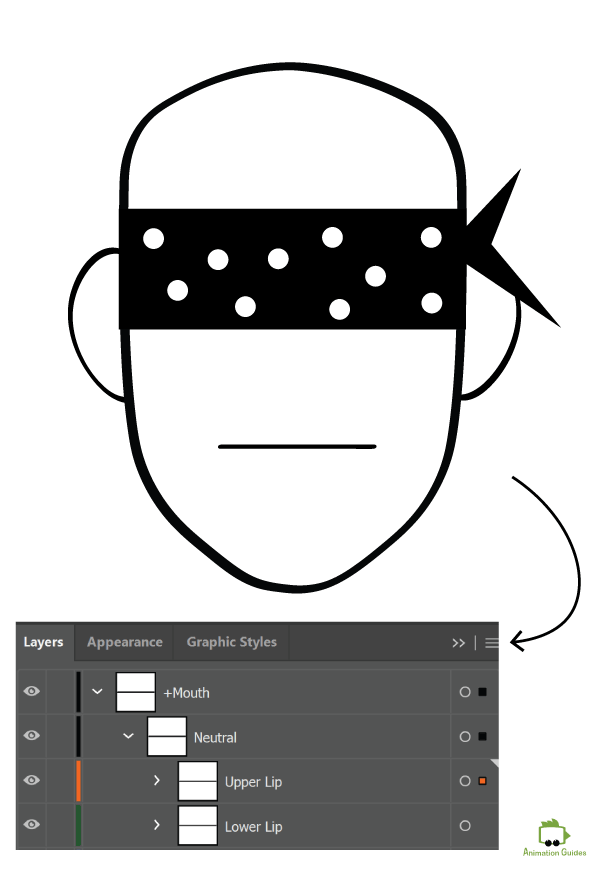

Step 1: Create the Upper and Lower Lips

The first step is to create the artwork for the lower and upper lips. Draw the lips so that they form a closed mouth.

The lips can be in any art style you want: just two straight lines to match a simple flat vector character, or more detailed, realistic, human-like lips.

- Create a layer named +Mouth

- Create a sub-layer named Neutral

- Place each lip on a separate sub-layer under the Neutral layer.

Do this for each head view your puppet will have. For example, if you will be using front, three-quarter, and profile views, you will need to create the lips for each of those views.

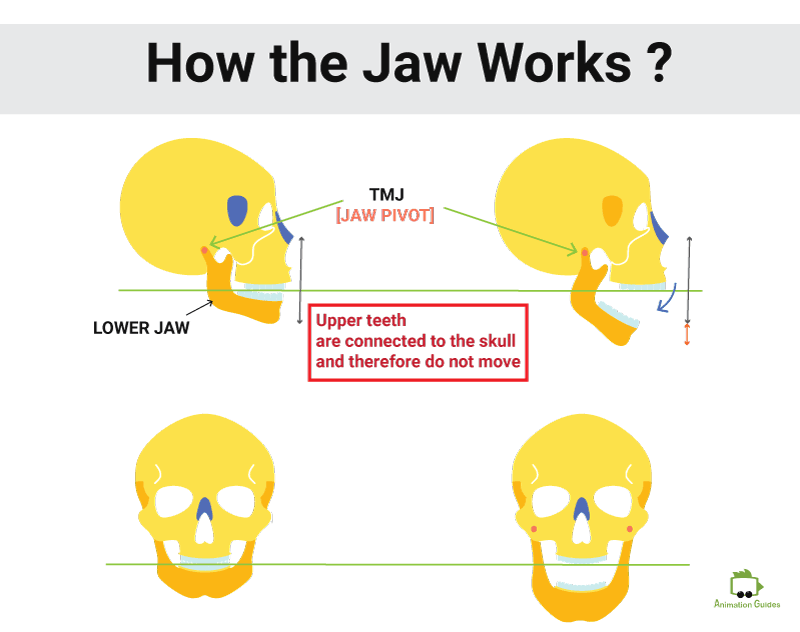

Step 2: Understanding How the Mouth Moves

Understanding how the mouth is constructed and the physical movement of the jaw will help in creating believable mouth shapes.

Jaw Movement

Upper Teeth

The upper teeth are connected to the skull and therefore never actually move.

Lower Teeth

The lower teeth are connected to the lower jaw and therefore rotate around the TMJ (which is a pivot point for the jaw) when the mouth opens and closes.
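To make the rotation idea concrete, here is a minimal Python sketch of a point on the lower teeth rotating around the TMJ pivot. The coordinates, the 15-degree opening angle, and the function name `rotate_about_pivot` are hypothetical values chosen for illustration (a profile view facing right, with y growing downward as in most drawing apps); they are not taken from any particular puppet.

```python
import math

def rotate_about_pivot(point, pivot, degrees):
    """Rotate a 2D point around a pivot by the given angle in degrees."""
    angle = math.radians(degrees)
    dx, dy = point[0] - pivot[0], point[1] - pivot[1]
    x = pivot[0] + dx * math.cos(angle) - dy * math.sin(angle)
    y = pivot[1] + dx * math.sin(angle) + dy * math.cos(angle)
    return (x, y)

# Hypothetical profile-view positions in pixels (y grows downward).
tmj_pivot = (0.0, 0.0)           # jaw hinge near the ear
upper_teeth_tip = (100.0, 0.0)   # attached to the skull, stays where it is
lower_teeth_tip = (100.0, 20.0)  # attached to the lower jaw

# Opening the mouth swings the lower teeth down and slightly back.
opened_lower_teeth_tip = rotate_about_pivot(lower_teeth_tip, tmj_pivot, 15)
print(upper_teeth_tip, opened_lower_teeth_tip)
```

The point to notice is that the lower teeth travel along an arc rather than straight down, which is why the lower teeth art usually needs a small horizontal shift as well as a vertical one when you adjust it for open mouth shapes.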

Upper Lip

The upper lip slides up and down while revealing or hiding the upper teeth. When the mouth is wide open the corners of the upper lip will stretch a little.

Lower Lip

The lower lip stretches as much as possible to wrap around the changing position of the lower teeth.

Skin and Wrinkles

The skin of the face stretches to match the new lower jaw position when the mouth opens. That usually creates recognizable creases under the nose.

Step 3: Define Which Mouth Shapes You Need to Create

The automated lipsync rig in Adobe Character Animator allows you to insert 14 mouth shapes for automatic sound and expression recognition.

The layers must be named and structured in a very specific way for the software to recognize them automatically.

Standard Mouth Structure for Adobe Character Animator

- +Mouth
  - Neutral
  - Smile
  - Surprised
  - Aa
  - Ee
  - Oh
  - Uh
  - S
  - D
  - M
  - R
  - L
  - F
  - W-oo

Neutral (the closed mouth), Surprised, and Smile are the 3 mouth shapes that the camera triggers based on your own mouth expression during recording.

The other 11 mouth shapes (also called visemes) are M, S, D, Ee, Aa, Uh, Oh, R, W-oo, F, and L.

Those 11 are activated based on the audio (and, optionally, a transcript) in your scene.
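Since the naming has to be exact, a quick check of your layer names before importing can save a round trip. The following is a minimal Python sketch, assuming you have typed or exported the names of the layers inside your +Mouth group into a list. The function name `missing_mouth_shapes` is hypothetical, and the case-insensitive comparison is a convenience of this sketch, not a statement about how Character Animator matches names.

```python
# The 14 shape names from the standard mouth structure listed above.
CAMERA_SHAPES = ["Neutral", "Smile", "Surprised"]
VISEMES = ["Aa", "D", "Ee", "F", "L", "M", "Oh", "R", "S", "Uh", "W-oo"]

def missing_mouth_shapes(layer_names):
    """Return the required mouth shapes that are absent from layer_names."""
    # Assumption of this sketch: ignore case and surrounding spaces.
    present = {name.strip().lower() for name in layer_names}
    return [name for name in CAMERA_SHAPES + VISEMES
            if name.lower() not in present]

# Hypothetical, partially finished mouth group.
my_layers = ["Neutral", "Smile", "Aa", "M", "Ee"]
print("Still to draw:", missing_mouth_shapes(my_layers))
```

An empty result means all 14 names are present; it says nothing about whether the artwork inside each layer is correct.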

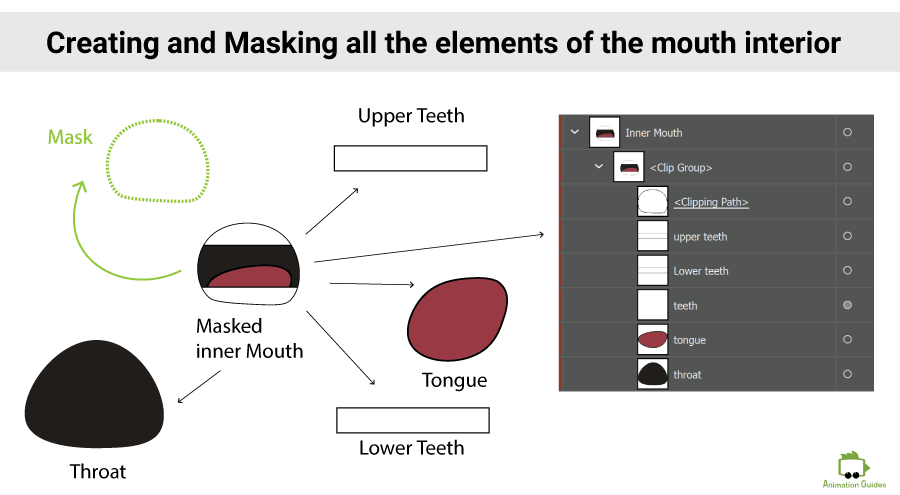

Step 4: Create and Mask the Inner Mouth Elements for All the Head Views

In step 1 we created the art for the lower and upper lips. That is enough to work with for Neutral, Smile, and a few other expressions. However, many of the mouth shapes show parts of an open mouth. Creating a full inner mouth will let you easily create an endless number of expressions later on. Once all the elements are created, it is just a matter of making a few simple adjustments for each mouth shape. The inner mouth consists of:

1. Upper teeth (and in some cases gums)

2. Lower teeth (and in some cases gums)

3. Tongue

4. Throat

All of the above elements will need to be inserted into a mask that will match the area between the upper and lower lip in each of the mouth shapes you create.

Let’s start with the Aa viseme.

First, we will need to adjust the upper and lower lips to form the Aa mouth shape. Then we will draw a shape between the open lips and mask the inner mouth to match that area.

Do it for all the head views you will be using in your animation.

Step 5: Draw All the Other Mouth Shapes

Now we will follow this process for the remaining 12 mouth shapes we need to create.

For the mouth shapes that do not require showing teeth or tongue, we will hide the inner mouth sub-layer and will just adjust the art for the lips.

Creating the "Smile" Mouth Shape

Creating the "M" Mouth Shape

For all the other visemes we will always start by adjusting the lips. Then match the mask of the inner mouth to the area between the upper and lower lips. Then we will move the tongue and the lower teeth to match the mouth shape we are creating.

For a fluid and realistic lipsync, it is important to make sure we do not move the upper teeth layer.

Importing to Ch

In the end, I usually turn all the mouth shapes into symbols and rename the layers before I import everything to Adobe Character Animator.

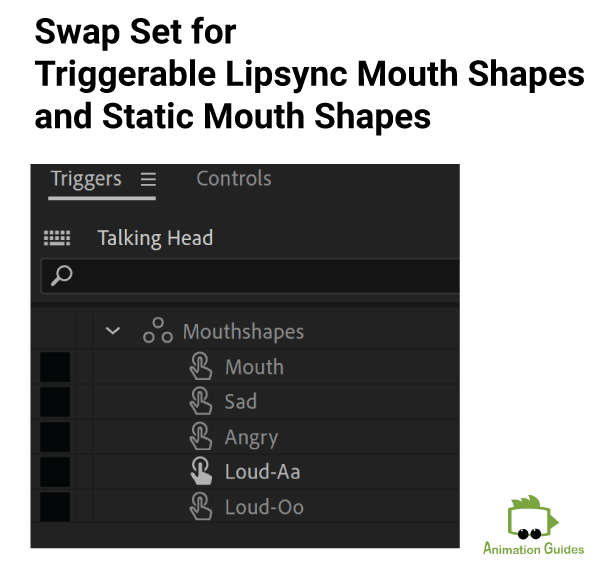

Step 6: Add Additional Mouth Shapes

You might want to create more mouth shapes to emphasize certain expressions, or even have several mouth shapes for the same sound depending on the tone it is pronounced in. You can do that with a swap set that contains the additional mouth shapes along with the Master Mouth group.

For example, you might want to have a mouth shape for a sad expression, an angry expression, shouting Aa, and shouting Oo.

Step 7: Create Additional Mouth Groups

In some cases, a regular mouth, or even a regular mouth for lipsync plus extra mouth shapes for expressions, might not be enough. You might want to have an entire extra mouth group for a character talking in a sad tone, an angry tone, etc.

Each mouth group will contain all the required visemes.

- Mouths
  - Mouth
    - Neutral
    - Smile
    - Aa
    - Uh
    - L
    - M
    - F
    - Oh
    - Ee
    - R
    - Surprised
    - D
    - S
    - W-oo
  - Sad Mouth
    - Neutral
    - Smile
    - Aa
    - Uh
    - L
    - M
    - F
    - Oh
    - Ee
    - R
    - Surprised
    - D
    - S
    - W-oo
  - Angry Mouth
    - Neutral
    - Smile
    - Aa
    - Uh
    - L
    - M
    - F
    - Oh
    - Ee
    - R
    - Surprised
    - D
    - S
    - W-oo
  - Happy Mouth
    - Neutral
    - Smile
    - Aa
    - Uh
    - L
    - M
    - F
    - Oh
    - Ee
    - R
    - Surprised
    - D
    - S
    - W-oo
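If you plan several mouth groups, it can help to print the full layer outline first and use it as a checklist while drawing. Here is a minimal Python sketch that builds such an outline. It uses the 14 shape names from the standard mouth structure in Step 3; the group names are the examples from this step, and the helper names `build_mouth_groups` and `format_tree` are hypothetical.

```python
MOUTH_SHAPES = ["Neutral", "Smile", "Surprised", "Aa", "D", "Ee", "F",
                "L", "M", "Oh", "R", "S", "Uh", "W-oo"]

def build_mouth_groups(group_names, shapes=MOUTH_SHAPES):
    """Return a nested dict: parent group -> mouth group -> shape layers."""
    return {"Mouths": {group: list(shapes) for group in group_names}}

def format_tree(tree, depth=0):
    """Render the nested structure as indented lines, one per layer."""
    lines = []
    for name, children in tree.items():
        lines.append("  " * depth + name)
        if isinstance(children, dict):
            lines.extend(format_tree(children, depth + 1))
        else:
            lines.extend("  " * (depth + 1) + child for child in children)
    return lines

groups = build_mouth_groups(["Mouth", "Sad Mouth", "Angry Mouth", "Happy Mouth"])
print("\n".join(format_tree(groups)))
```

With 4 mouth groups and 14 shapes each, that is 56 mouth shape layers per head view, so it is worth deciding early which tones your character really needs.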

Free Mouth Sets

A few free mouth sets are available online and can be used to create talking characters in Adobe Character Animator.

1. You can download a free mouth set from the Okay Samurai website. You will find the mouth template at the very bottom of the page. The mouth sets are available in .psd and .ai formats and are already structured and named properly to be used in Adobe Character Animator.

2. You can download the talking head example in this tutorial and the source .ai file with all the mouth shapes here.

Now What?

Now that your character has all the required art for the mouth shapes, it is time to make it talk. Creating lipsync from an audio file is a very simple process. You can read about the 5 steps to making a puppet talk here.

Stay tuned for new tutorials.
